On June 2, 2014, we released our first Android application to the Google Play store. “Llama Detector” is a lifestyle app that gives end-users the ability to detect the presence of llamas in social situations. It affords the end-user greater comfort in their daily interactions by allowing them to quietly and quickly detect hidden llamas wherever they may be. It does this using your platform’s on-device camera hardware and peripherals. This amazing and technologically advanced application is guaranteed to provide end-users with seconds or even minutes of amusement. This posting serves as the end-user documentation and FAQ listing.

Usage

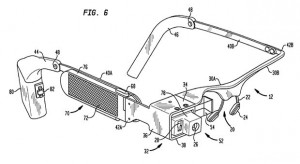

Using the Llama Detector is simple and straightforward. The application prioritizes use of the rear-facing camera on your device. If the device has no rear-facing camera, then the front-facing camera is used. If the device has no camera at all, then you will need to ask the supposed llama directly if it is a llama, or spend a few minutes carefully examining the suspected area for llamas.
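For the curious, the camera fallback order described above can be sketched in a few lines of plain Java. This is an illustrative sketch only, not the application's actual source: the names `CameraChoice`, `CameraSelector`, and `choose` are hypothetical, and the real app would query the Android camera hardware rather than take two booleans.

```java
// Hypothetical sketch of the camera fallback order; not the app's real code.
enum CameraChoice { REAR, FRONT, ASK_THE_LLAMA }

final class CameraSelector {
    private CameraSelector() {}

    /**
     * Prefers the rear-facing camera, falls back to the front-facing camera,
     * and otherwise leaves detection to the end-user.
     */
    static CameraChoice choose(boolean hasRearCamera, boolean hasFrontCamera) {
        if (hasRearCamera) {
            return CameraChoice.REAR;
        }
        if (hasFrontCamera) {
            return CameraChoice.FRONT;
        }
        // No camera at all: ask the supposed llama directly.
        return CameraChoice.ASK_THE_LLAMA;
    }
}
```

Calling `CameraSelector.choose(false, true)` yields `FRONT`, matching the second case in the paragraph above.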

Upon launching the application, point the camera at the item or region that you suspect to be llama-infested. Depress the button labelled ‘Scan’ when you have successfully framed the area that needs to be analyzed. The image will be captured, the red scan line will traverse the screen, and the detection process will begin.

If you decide against analysis after beginning the scan, for any reason, you may cancel the operation by depressing the ‘Cancel’ button. Otherwise, scanning will continue for approximately 10 seconds. After scanning, the Llama Detector will indicate if any llamas have been detected. Sometimes other items are detected and Llama Detector is able to indicate what it has identified.

If you would like to alter the detection sensitivity of the application, you may do so through the application preferences. Choose the preferences either through the soft button or menu bar. Then, display the Llama Sensitivity Filter. Enter an integer value between 1 and 1000 where 1000 is the highest sensitivity value (and 1 is lowest). This will alter the detection algorithm characteristics. A higher value will make the results more accurate with fewer false positives. The default value is 800.
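The accepted range for the Llama Sensitivity Filter can be illustrated with a short Java sketch. The class name `LlamaSensitivity` and the method `parse` are hypothetical, and the behavior for invalid input is an assumption: this post does not say what the application does with a value outside 1 to 1000, so the sketch simply falls back to the default of 800.

```java
// Hypothetical sketch of the sensitivity setting; not the app's real code.
final class LlamaSensitivity {
    static final int MIN = 1;        // lowest sensitivity
    static final int MAX = 1000;     // highest sensitivity
    static final int DEFAULT = 800;  // default stated in this post

    private LlamaSensitivity() {}

    /**
     * Parses the value typed into the preference field.
     * Assumption: anything that is not an integer in [MIN, MAX]
     * falls back to DEFAULT.
     */
    static int parse(String input) {
        if (input == null) {
            return DEFAULT;
        }
        try {
            int value = Integer.parseInt(input.trim());
            return (value >= MIN && value <= MAX) ? value : DEFAULT;
        } catch (NumberFormatException e) {
            // Not an integer at all, e.g. "alpaca".
            return DEFAULT;
        }
    }
}
```

Under these assumptions, `LlamaSensitivity.parse("1000")` selects the highest sensitivity, while `LlamaSensitivity.parse("alpaca")` quietly keeps the default.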

FAQ

1. How much does this amazing application cost?

Llama Detector is an absolutely free download from the Google Play store.

2. Free? That’s crazy! How do you do that?

How do we do it? Volume.

3. What sort of personal information does Llama Detector collect?

Llama Detector collects no personal information and does not communicate with any external servers. It should be noted though that by downloading the application you have identified yourself as either a llama or a llama enthusiast.

4. I went to the zoo and used Llama Detector at the llama exhibit but it detected no llamas. Why is that?

Llamas are very difficult to hold in captivity. They tend to sneak out of their pens and hang out at the concession stands eating hot dogs and trying to pick up women. For this reason, most zoos use camels or, in some cases, baby giraffes dressed up as llamas in the llama pens. The Llama Detector application can be used to indicate if a zoo is engaged in this sort of duplicity. For this reason, many zoos nationwide ban the use of Llama Detector within the confines of their property.

5. I used Llama Detector in my house and it detected a llama in my bathroom. Now I am afraid to use the bathroom. What do I do?

Llamas are quite agile and fleet of foot. It is important to note that detection of llamas should be run multiple times for surety. If the presence of a llama is verified, start making lettuce noises and slowly move to an open space. The llama will follow you to that space. Then stop making the lettuce noises. The llama will wonder where the lettuce went and start looking around the open space. Then quickly and silently proceed into the now llama-free bathroom.

6. When will the iOS version be available?

Our team of expert programmers is hard at work developing a native iOS version of this application so that iPhone users can enjoy the comfort and protection afforded by this new technology. The team is currently considering whether to wait for the release of iOS 8 to ensure a richer user experience.

7. I have another question but I don’t know what it is.

Feel free to post your questions to android at formidableengineeringconsultants dot com. If it’s a really good question, we’ll even answer it.